In Pursuit of a Perfect TTO

This is an extended essay attempting to summarize what's broken in university technology transfer—across universities, research labs, and academic institutions more broadly.

There's a lot of noise in this space. Most of it clusters around the same themes: spin-outs don't happen efficiently, processes are slow, and everyone has an opinion about greed vs speed.

This piece is my attempt to do two things:

1. Describe the system failures in a way that matches what it feels like to operate inside them, and

2. Present a solution that fits into the larger proposal of unbundling the university, as written by Ben Reinhardt.

What is a perfect TTO?

"One of the basic rules of the universe is that nothing is perfect. Perfection simply doesn't exist… Without imperfection, neither you nor I would exist."

- Stephen Hawking

With that caveat in mind, let's start with a simple question:

If you removed constraints of budget and talent, what would a perfect tech transfer office actually look like?

This question is useful not because it indulges idealism, but because it exposes how much of today's dysfunction is treated as inevitable. If you imagine a TTO without inherited limits, you're forced to separate true constraints from habits, incentives, and legacy design choices.

In a perfect TTO:

- Every disclosed invention is given a credible path to real-world use.

- The office is a place people want to work with—and work for.

- Companies actively seek out university technologies rather than being "marketed" to.

- Researchers engage early and speak openly about positive experiences.

- TTO staff are known for speed, clarity, and execution—and are in demand elsewhere.

- University leadership treats the TTO as core institutional infrastructure, not a back-office function, and recognizes its role in turning research into real outcomes.

When I shared this description with friends working inside TTOs, one of them joked that I should add "world peace" to the list.

The gap between this vision and current practice feels unbridgeable. But that gap is precisely the point. In this piece, I argue the ideal is not utopian. The failures are not primarily cultural, individual, or financial.

They are systemic.

What follows examines the constraints that prevent technology transfer from working as intended—and outlines why unbundling the system makes this version of a TTO not only plausible, but inevitable.

In the debates and arguments around tech transfer, there are three seminal works:

1. Unbundling the University — Ben Reinhardt, Speculative Technologies, Feb 2025

2. America's Innovation Pipeline Is Leaking — Renaissance Philanthropy. Written by Orin Herskowitz, Dmytro Pokhylko, and Kumar Garg

3. Spinout.fyi — Nathan Benaich, Air Street Capital. The first and most reliable open-source dataset on founders from academia globally

Ben uses a bundling analogy: a coffee shop + laundromat + karaoke bar all merged into one space because it made sense historically. But none of the individual functions work properly because the combined system is optimized for none.

Renaissance uses the leaky pipe: the innovation pipeline leaks at dozens of points (they list 60+ in the U.S. system alone), and the fix isn't more water—it's fixing the leaks.

Introduction

If you replace "university" with "technology transfer" in Ben's essay, the logic holds perfectly. The TTO problem fits thematically with the larger proposal of unbundling the university.

Tech transfer is a tricky thing. Almost everybody touches it at some point: filing an invention disclosure, negotiating a license, watching a promising technology languish in "tech transfer limbo," or wondering why the breakthrough in the lab next door never made it to market.

Across the board—from administrators to researchers to industry partners to venture capitalists—there's broad agreement that:

1. Tech transfer is important

2. The current system is not working well

3. Reform is needed

But there's strong disagreement about:

1. Why tech transfer matters (economic development? social impact? revenue?)

2. What is broken (understaffing? incentives? legal complexity? risk?)

3. How it should change (more funding? new policy? different metrics?)

It's a blind-men-and-an-elephant situation. Each stakeholder is holding the piece of the system closest to their priorities:

- Researchers experience bureaucracy and delay

- Administrators see liability and risk

- Industry sees slow negotiation and unclear ownership

- Policymakers see weak outputs

- VCs see friction, confusing terms, and often—yes—greed

The list goes on.

And to borrow from Ben's conclusion:

The solution is not to burn the system down. Unbundling a large, entrenched institution is a monumental task.

The aim of this piece is narrower: fix one function and make it globally scalable.

The four systemic failures inside a TTO

There are four fundamental systemic failures in most TTO setups today:

1. Uncertainty

Outcomes can't be guaranteed. Success often depends on aligning external company interest with internal institutional processes. No other department in a university operates under the same uncertainty.

This uncertainty, combined with the risk-averse nature of academia, leads to a predictable pattern: risk is managed through oversight and bureaucracy.

2. Opacity

For inventors and external partners, there is often no visibility into:

- what stage a case is in

- how long the next step will take

- who is responsible

Delegations of authority, approval chains, and inconsistent communication create confusion and frustration. Licensing professionals—at the coal face—take the heat.

3. Overworked core

Licensing professionals coordinate the entire journey: inventors, internal stakeholders, administrators, internal/external legal, and companies. They are required to "influence without authority," while managing large, diverse portfolios.

The workload is also highly variable—tied to academic calendars, grant cycles, and industry trends. That variability makes it almost impossible to optimize processes or implement systematic improvements.

4. Lack of tools

Most tech transfer software is built for reporting and compliance, not execution. Data gets entered after the work is done. The tools don't streamline operations—they add administrative burden to an already stretched team.

This is a scaling problem. The volume of potentially commercializable research exceeds the capacity to evaluate, protect, and market it—especially given the seasonal arrival of disclosures and the manual nature of the work. This isn't a funding problem solved by hiring more staff. It's structural.

Size matters

Let's do a rough segmentation of TTOs by size:

Example: MIT TLO (an XL office)

MIT's Technology Licensing Office (TLO) is a good example because its volume, team size, and annual report are publicly available.

MIT TLO

MIT TLO Team

Two immediate tells:

1. Admin/support outnumbers the core licensing team

2. The volume is enormous relative to the core team

Even basic math makes the point. If reviewing a disclosure takes 10–40 hours, and we use a conservative average of 20 hours across 684 disclosures, that's:

684 × 20 = 13,680 hours of evaluation work.

Spread across 21 licensing staff (16 staff + 3 team leads + 2 interns), that's about 651 hours each, or roughly 4 months per person per year (assuming about 160 working hours a month) just on evaluating new disclosures, before you factor in:

- patent prosecution and office actions

- marketing and outreach

- NDAs, MTAs and other agreements

- negotiation cycles

- internal approvals

- portfolio maintenance

- reporting and CRM updates
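
The estimate above can be reproduced in a few lines of Python. This is a rough sketch rather than MIT's own accounting: the 160 working hours per month is an assumption added for illustration, and the function and field names are hypothetical.

```python
def evaluation_load(disclosures: int, hours_per_disclosure: float, staff: int,
                    hours_per_month: float = 160.0) -> dict:
    """Estimate the disclosure-evaluation workload per licensing staff member.

    hours_per_month is an assumed figure (about 40 hours x 4 weeks),
    not a number taken from the essay.
    """
    total_hours = disclosures * hours_per_disclosure
    hours_each = total_hours / staff
    return {
        "total_hours": total_hours,
        "hours_per_person": hours_each,
        "months_per_person": hours_each / hours_per_month,
    }


# Figures from the text: 684 disclosures, 20 hours each, 21 licensing staff.
load = evaluation_load(disclosures=684, hours_per_disclosure=20, staff=21)
print(load)
```

Under the same assumption, the 10-hour and 40-hour ends of the range work out to roughly 2 and 8 months per person, so the conclusion does not depend much on the exact average chosen.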

And the workload isn't evenly distributed. Some departments disclose far more than others. Some licensing professionals carry much heavier loads. That variability makes it worse, not better.

Also, life sciences dominate licensing volume and revenue. Physical sciences and engineering often move slower and license less frequently, because of industry structure and adoption friction.

This pattern isn't unique to MIT. It shows up everywhere.

The human cost of bureaucracy

The systemic problems in technology transfer have human consequences.

Researchers become frustrated with slow processes and learn to route around the TTO—sometimes legally, sometimes not. Licensing professionals burn out from impossible workloads and leave for industry positions. Promising technologies languish and eventually become obsolete, with their potential societal benefit never realized.

More insidiously, the failures become self-reinforcing:

Researchers who have bad experiences stop submitting disclosures (or submit only when legally required), which reduces the TTO's visibility into valuable work. Licensing professionals, lacking time for proactive marketing, never build the industry relationships that would make their jobs easier.

The cycle continues.

What should we measure?

The key number for any tech transfer office should be licenses executed per year.

Revenue and equity are not good performance metrics for the office itself. The TTO doesn't control whether the licensee succeeds, or whether the startup becomes valuable. Those outcomes depend on the company and the founders.

So the most direct measurable "output" the office controls is: executed agreements.

For MIT, that's ~137 licenses/options per year across ~20 licensing staff, or about 7 per person on average. That sounds good on paper, but it hides variance by field and deal complexity.

And again, this is only the "execution count," not the total work required to make those executions happen.

Making the number of licenses the key metric shifts the focus toward revenue-generating deals and away from "economic development". It also helps frame two questions:

- "How many active cases should a licensing staff handle?"

- "How many licenses should a licensing staff execute per year?"

This shift in metrics reorients culture toward execution—measured in completed deals—rather than vague notions of economic development.

The process is manual all the way down

This is the part most outsiders underestimate.

Licensing professionals don't just evaluate and negotiate. They also:

- chase signatures through approval chains

- manage internal legal reviews

- answer constant status requests ("where is this at?")

- update CRMs designed for reporting, not execution

- restart the loop every time a new company shows interest

Even basic agreements—NDA/CDA, MTA, options, licenses—trigger a similar pattern:

draft → review → approve → route → chase → sign → record → report.

Each step has built-in delay. Delay creates follow-up. Follow-up creates more work. More work reduces proactive execution.
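
One way to make that pattern concrete is to treat the eight steps as an explicit sequence and record every hand-off as it happens. The sketch below is illustrative only: `Step` and `Agreement` are hypothetical names, and a real system would also store timestamps and owners for each transition.

```python
from enum import IntEnum


class Step(IntEnum):
    """The agreement steps named in the text, in order."""
    DRAFT = 0
    REVIEW = 1
    APPROVE = 2
    ROUTE = 3
    CHASE = 4
    SIGN = 5
    RECORD = 6
    REPORT = 7


class Agreement:
    """Tracks one agreement through the steps, logging each hand-off."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.step = Step.DRAFT
        self.log: list[tuple[str, str]] = []  # (from_step, to_step)

    def advance(self) -> Step:
        """Move to the next step and record the transition."""
        if self.step is Step.REPORT:
            raise ValueError(f"{self.name} has already been reported")
        nxt = Step(self.step + 1)
        self.log.append((self.step.name, nxt.name))
        self.step = nxt
        return nxt

    def remaining(self) -> int:
        """Number of hand-offs still ahead of this agreement."""
        return Step.REPORT - self.step


nda = Agreement("NDA with a hypothetical company")
nda.advance()
nda.advance()
print(nda.step.name, nda.remaining())
```

Even for a simple NDA, seven hand-offs sit between the first draft and the final report, and each one is a place where the case can wait.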

If this isn't a system designed to fail, it's at least a system designed to stall.

The innovation pipeline is broken

Every year the world invests over $700B in R&D. The output is extraordinary discoveries—but a tiny fraction reaches market.

Renaissance argues the problem isn't a lack of innovation. It's failure in the pipeline that connects research to real-world impact. And even in the U.S., with the most developed infrastructure, there are 60+ identifiable leaks.

Adding more water to a leaky system doesn't fix leaks. It increases the amount lost.

In much of the world, the pipes barely exist. Researchers in emerging economies often have no TTO support, no patent support, no pathway. The asymmetry is brutal: a researcher in Boston has an apparatus; a researcher in Nairobi or Manila often has none.

Availability bias follows: we over-focus on the institutions already visible to the system.

Workflow

Disclosure starts with a form. Most of the information already exists in manuscripts, slides, or lab notes—but the form demands it again, often in a way that doesn't match how academics think about their work.

Then there's due diligence: funding agreements, sponsor rights, background IP, joint inventors, inter-institutional issues. The rules are straightforward, but the documents are scattered.

Then evaluation: prior art search, literature, patentability risk, market potential, and judgment calls. Then internal review. Then patent drafting via external firms. Then inventor review. Then filing.

Then marketing: a non-confidential summary, website listing, outreach lists, campaigns, follow-ups, calls. Then NDAs. Then deeper technical diligence. Then commercial value discussions. Then term sheet. Then license negotiation. Then signature routing.

Post-agreement: inventor splits, revenue distribution, royalty reporting, audit trails, payment tracking.

This is heavy, manual work with many touchpoints. The licensing professional carries the complexity.

The system we need must remove manual touchpoints so people can spend time where judgment matters.

Solution

The solution is not "hire more people to do the same work faster." The solution is to fix the leaks so that when you do hire people, they can actually execute.

Yes, this is where AI enters the conversation—and yes, people roll their eyes. Let me try to make the case properly.

The goal is not replacing human judgment. It's augmenting human capacity—handling routine analysis and coordination so licensing professionals can spend time on the high-value work that actually requires expertise.

The problems in tech transfer are largely structural, not a matter of personnel. Most TTO professionals are competent and dedicated people working inside a system that makes success difficult.

Fixing tech transfer means changing the relationship between:

- Volume of work, and

- Capacity to do it

There are two classical mechanisms:

1. Reduce the work (be more selective)

2. Increase capacity (do the same work faster)

Option 1 is unpalatable.

Practically, it's hard to predict which inventions will matter. Philosophically, the mission is to maximize societal benefit—choosing not to pursue potential impact is hard to justify.

Option 2 is adding more water.

Historically, this has meant hiring more staff, which hits budget constraints and talent scarcity, and doesn't solve coordination overhead.

AI offers a third path:

Change the nature of the work, so humans do judgment and relationships while machines handle routine analysis and operational burden.

AI as infrastructure, not replacement

Human judgment remains essential: evaluating potential, negotiating terms, managing relationships, making tradeoffs.

But much of what consumes TTO time is not "human work." It's information retrieval, summarization, drafting standard documents, routing approvals, updating databases after the fact.

AI can do those pieces faster and more consistently, freeing humans to focus on:

- strategic evaluation

- relationship building

- complex negotiation

- portfolio strategy

What is needed: a technology transfer operating system (ttOS)

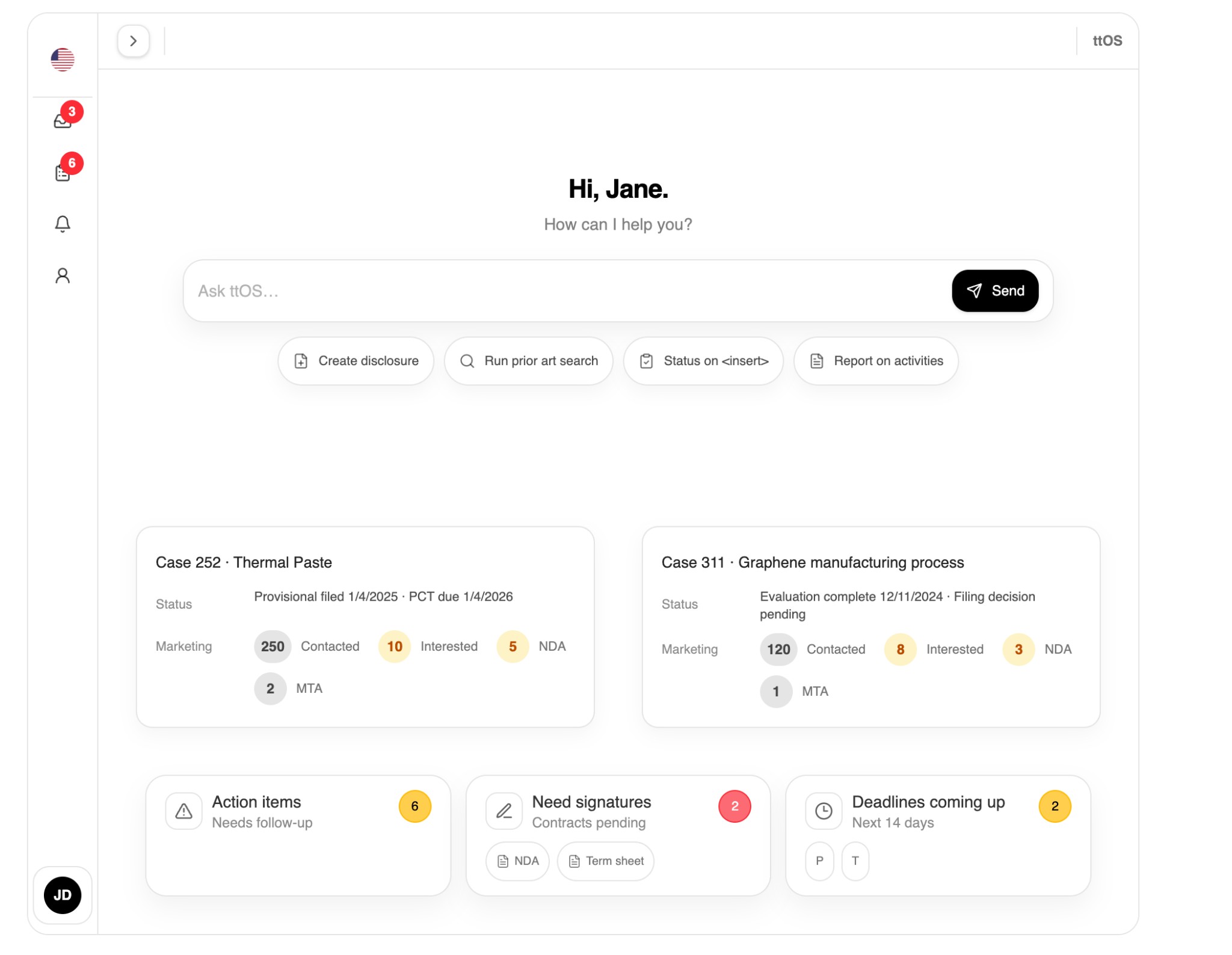

What I'm proposing is a technology transfer operating system: ttOS.

It is AI-powered infrastructure that supports tech transfer execution, especially for institutions that can't staff like MIT. For XL-scale offices, it reduces the time spent on manual work.

Core capabilities:

1. Core automation modules: Disclosure, Evaluation, Protection, Marketing, and Licensing

2. Dashboards: inventor view + case view; fewer "where is this?" emails

The dashboards provide a single, real-time view of all work in progress and the status of each case. They function as an automatic CRM—capturing activity as it happens and highlighting what needs attention next. Signature workflows are tracked end-to-end, showing who must sign, who is delegated, and who is unavailable, with automatic routing to the next authorized signer.

- Automatic CRM: the work generates the record; no manual updating after the fact

- Signature routing: rules + timeouts + escalation; make bottlenecks visible instead of hidden
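
The signature-routing idea (rules, timeouts, escalation) can be sketched as follows. This is a minimal illustration, not the ttOS implementation: the `Signer` type, the function names, and the five-day timeout are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Signer:
    name: str
    available: bool = True
    delegate: Optional["Signer"] = None  # who signs when this person cannot


def resolve_signer(signer: Signer) -> Optional[Signer]:
    """Follow the delegation chain to the first available signer."""
    seen = set()  # guards against circular delegation
    current: Optional[Signer] = signer
    while current is not None and current.name not in seen:
        if current.available:
            return current
        seen.add(current.name)
        current = current.delegate
    return None  # nobody in the chain can sign


def next_action(signer: Signer, days_waiting: int, timeout_days: int = 5) -> str:
    """Decide whether to request a signature or escalate the bottleneck."""
    target = resolve_signer(signer)
    if target is None:
        return f"ESCALATE: no available signer for {signer.name}"
    if days_waiting > timeout_days:
        return f"ESCALATE: {target.name} has not signed in {days_waiting} days"
    return f"REQUEST: {target.name}"


deputy = Signer("Deputy Director")
director = Signer("Director", available=False, delegate=deputy)
print(next_action(director, days_waiting=2))
```

The design choice here is that an unavailable signer or an expired timeout produces a visible escalation instead of a silent wait, which is what makes the bottleneck show up on a dashboard.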

Inventor View

The inventor dashboard provides researchers with a clear view of their technology transfer cases. They can see the status of each disclosure, track progress through evaluation and protection stages, monitor marketing activities (contacts, NDAs, MTAs), and view upcoming deadlines. A chatbot interface allows them to create disclosures, run prior art searches, check status, and report activities—all without needing to understand the internal workflow.

Case View

The case view dashboard gives licensing professionals a comprehensive overview of each technology transfer case. It shows:

- Due diligence: Funding agreements, background IP, joint inventors, inter-institutional agreements

- Prior art analysis: Patent references, literature reviews, novelty assessment, claim scope evaluation

- Market research: Comparable deals, royalty ranges, milestone payments, data sources

- Workflow status: Current stage, pending actions, signature requirements, deadlines

All information is automatically captured as work progresses, eliminating the need for manual CRM updates.

3. Agreement orchestration: stop treating each university's NDA as sacred scripture; standardize by geography and tolerance

Why does every university have its own NDA or CDA?

How different, in practice, is an agreement from the University of Iowa compared to one from University College London? Jurisdictional differences between countries matter, of course. But within a single country—or even a single legal system—why can't there be a standard agreement for the UK, the US, or the EU?

If we understand where agreements actually differ, we can make an informed judgment about whether those differences matter and whether the associated risk is tolerable. Most of the variation does not justify bespoke drafting and endless redlining.

With AI, it becomes possible to maintain a standard set of agreements, structured by geography and institutional risk tolerance, and to surface only the true deltas that require attention.
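
To show what surfacing only the deltas could look like, here is a deliberately simple sketch that compares an incoming agreement with a standard template clause by clause. It uses plain string similarity from Python's standard library as a stand-in for whatever comparison a real system would use; the clause texts, the 0.9 threshold, and the function name are hypothetical, and none of this is legal analysis.

```python
import difflib


def clause_deltas(standard: dict[str, str], incoming: dict[str, str],
                  threshold: float = 0.9) -> dict[str, float]:
    """Return the clauses whose wording differs materially from the standard.

    Similarity is a character-level ratio between 0 and 1; clauses scoring
    below the (assumed) threshold are flagged for human review.
    """
    deltas = {}
    for clause, std_text in standard.items():
        other = incoming.get(clause, "")  # a missing clause scores 0
        ratio = difflib.SequenceMatcher(None, std_text.lower(), other.lower()).ratio()
        if ratio < threshold:
            deltas[clause] = round(ratio, 2)
    return deltas


standard = {
    "term": "This agreement lasts three years from the effective date.",
    "governing_law": "This agreement is governed by the laws of England and Wales.",
}
incoming = {
    "term": "This Agreement lasts three years from the Effective Date.",
    "governing_law": "This agreement is governed by the laws of the State of Iowa.",
}

flagged = clause_deltas(standard, incoming)
print(sorted(flagged))
```

In this example the term clause differs only in capitalization and is ignored, while the governing-law clause is flagged, which is the kind of jurisdictional difference that does deserve attention.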

In practice, technology transfer relies on a small, well-defined set of agreement types:

1. Non-Disclosure Agreements (NDAs): typically in two forms, one-way and mutual

2. Material Transfer Agreements (MTAs): for sharing research materials

3. Inter-institutional Agreements (IIAs): governing jointly owned IP, including commercialization lead, cost sharing, and revenue flows

4. Deeds of Assignment: assigning IP from inventors to the university. Although ownership usually arises through employment contracts, court decisions (such as UWA v Gray) have led most universities to require this additional, standardized step

5. Inventor Revenue-Share Agreements: commonly splitting revenue one-third to inventors, one-third to departments, and one-third to the TTO, with the inventor share further allocated based on contribution. Resolving this early avoids downstream conflict

6. Term Sheets: capturing the key financial terms and material clauses to be negotiated before drafting the full agreement

7. Full License Agreements: including options and definitive licensing terms

These are not exotic instruments. They are repeated thousands of times across institutions.

The solution is straightforward: build standard agreement templates usable across a given geography, with explicit, managed variations for jurisdictional differences. Use them consistently. Stop treating each institution's version as unique, and stop paying the coordination cost of endless redlining when the underlying risk is largely the same.
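
Standardization also makes the arithmetic behind these agreements easy to automate. As one example, the one-third split described for inventor revenue-share agreements can be written down directly. The sketch below assumes the common thirds arrangement mentioned above; the revenue figure, the 60/40 contribution split, and the names are invented for illustration, and actual policies vary by institution.

```python
from fractions import Fraction


def split_revenue(net_revenue: Fraction,
                  contributions: dict[str, Fraction]) -> dict[str, Fraction]:
    """Split revenue into thirds, then divide the inventor third by contribution."""
    if sum(contributions.values()) != 1:
        raise ValueError("inventor contributions must sum to 1")
    third = net_revenue / 3
    shares = {"department": third, "tto": third}
    for inventor, fraction in contributions.items():
        shares[f"inventor:{inventor}"] = third * fraction
    return shares


# Hypothetical numbers: 90,000 in net revenue, two inventors at 60% and 40%.
shares = split_revenue(
    Fraction(90_000),
    {"Inventor A": Fraction(3, 5), "Inventor B": Fraction(2, 5)},
)
for party, amount in shares.items():
    print(party, float(amount))
```

Using exact fractions avoids rounding drift, so the individual shares always add back up to the amount received.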

4. Discovery: make inventions genuinely discoverable, not buried on a TTO site

Marketing is the most underdeveloped capability in most TTOs. ttOS turns it from a passive listing function into a proactive engine. Instead of a static one-pager buried on a TTO website, each technology is presented on its own dedicated webpage, with AI-generated content—reviewed and approved by both the licensing manager and the inventor—structured and optimized for discoverability.

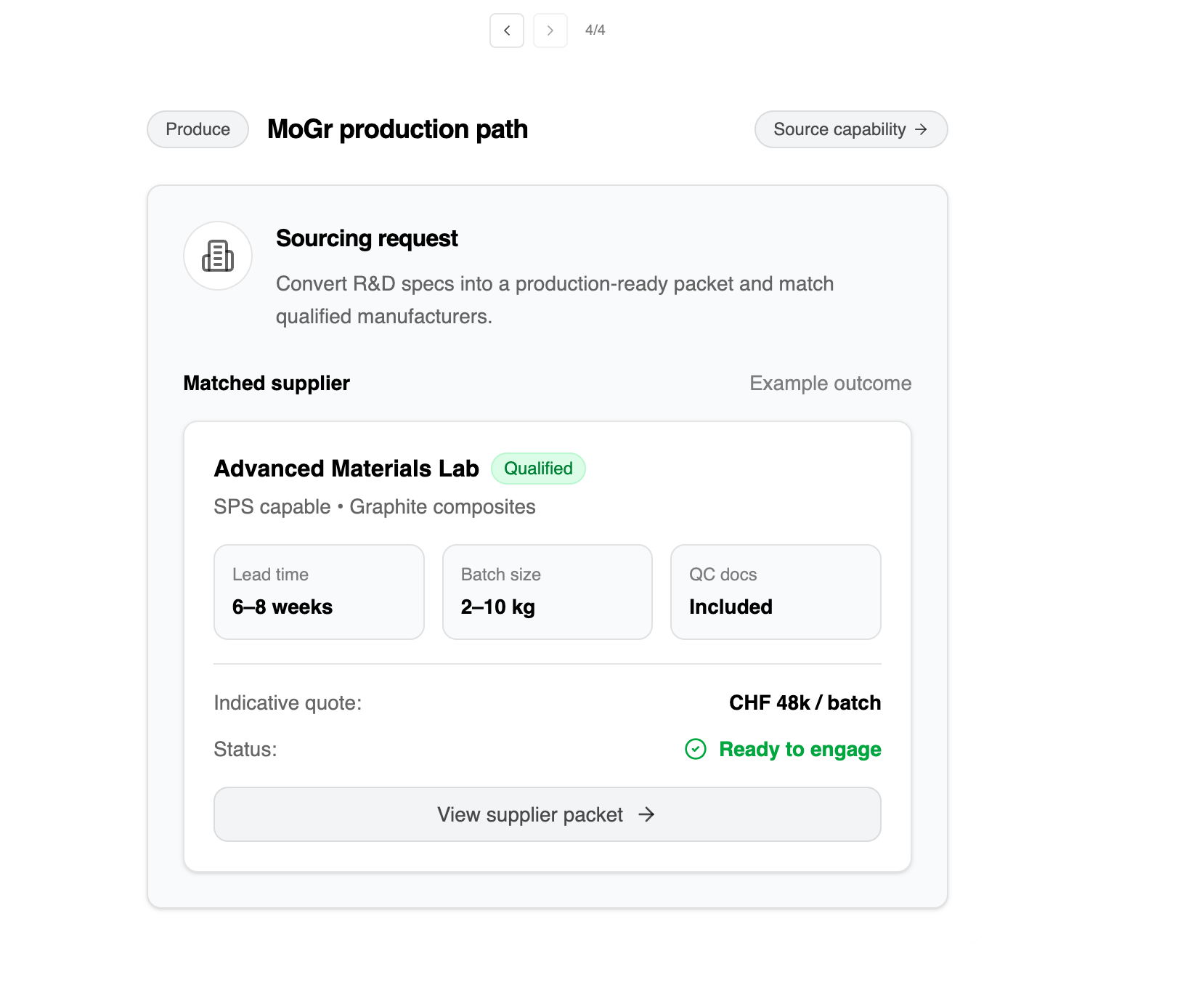

5. Bundling: make it easy to combine related IP across institutions without doubling friction

Most inventions are not valuable in isolation. Their impact often depends on complementary and enabling IP.

For example, a composite material developed at one institution may require an enabling technology from a university in the UK and a scalable manufacturing process developed in Switzerland to become commercially viable.

6. Produce: make early-stage production possible using existing academic infrastructure—ttOS as the execution layer for early translation

Conclusion

Technology transfer matters because research matters. University labs generate insights that could improve human welfare—but potential is not impact. Potential becomes impact only when research reaches people and organizations that can apply it.

The current system is straining under the weight of its own success. More research is produced than ever before, but the mechanisms for translating it have not evolved to match. The result is a widening gap between what's possible and what happens in reality: technologies that sit in queues, stall in negotiation loops, or die because nobody had time to find the right path.

AI will not solve everything. But it can address the capacity constraint that underlies many failures. By automating routine analysis and operational coordination, we can free professionals to do the work that only humans can do—and allow tech transfer to scale with research output instead of falling further behind.

I have built ttOS, an initial implementation of this approach. The platform is currently in private beta, focused on stabilisation and refinement, with a public launch planned for February 2026.

If you are interested in partnering or helping, please contact me at ash@ttos.ai.

Gratitude

Thank you to the people who gave feedback on drafts of this piece:

Priyanka Dasgupta, Filipe Ramos, Hafida Boufraioua, Vlad Iorgulescu & Oana Ravikumar.